AI Responsibility Maturity Audit

To help you meet the regulatory challenges of the EU AI Act and manage the major risks associated with artificial intelligence, particularly misuse, Bureau Veritas offers a comprehensive maturity audit solution to assess and secure your generative AI systems, your AI projects, and your organizational capabilities. The approach is designed as part of a broader transformation effort and is tailored to your company's role in the AI value chain and the competencies involved.

Anticipate regulatory compliance and strengthen stakeholder trust through a structured methodology.

What Is an AI Maturity Audit?

An AI maturity audit is a comprehensive, structured assessment of an organization’s ability to develop, govern, and effectively deploy artificial intelligence solutions—all within a transformation, skills development, and European AI Act compliance framework.

Bureau Veritas can support you as an independent trusted third party.

This audit examines several key domains: AI strategy, data governance, model quality, risk management, regulatory compliance, internal competencies, technical infrastructure, and key AI‑related skills. It evaluates the organization’s maturity on a progressive scale, identifying strengths to build on and areas that require priority improvement.

The AI maturity audit provides a clear roadmap to strengthen organizational capabilities and competencies, optimize technology investments, and support responsible and sustainable AI transformation.

Why Should You Be Supported in Your Responsible AI Maturity Audit?

A regulatory context with the EU AI Act

In force since 1 August 2024, the EU AI Act imposes strict obligations on companies that develop or use AI systems and process data. These obligations depend on the organization's role (for example, provider or deployer) and on the risk level of its AI use cases.

Most of its obligations become applicable on 2 August 2026, particularly those for high‑risk AI systems and for systems subject to transparency obligations.

With fines of up to EUR 35 million or 7%* of global annual revenue, whichever is higher, compliance is an absolute necessity.

A complex challenge for companies

The figures speak for themselves:

- 68% of companies** struggle to interpret the EU AI Act and its impact on their AI projects, data, and internal organization.

- 72% of executives* believe their organizations are not prepared for the EU AI Act and its impacts.

- 60% of multinational corporations*** have not implemented the required AI and data governance frameworks aligned with their organizational role due to a lack of structured competencies.

Multiple risks to manage

AI systems present three major categories of risks, related to data, models, and business use cases:

- Malicious use: cyberattacks, disinformation, biological weapon development, abusive data exploitation

- Malfunctions: algorithmic bias, data‑related reliability errors, loss of control over autonomous systems

- Systemic risks: personal data protection and environmental impact

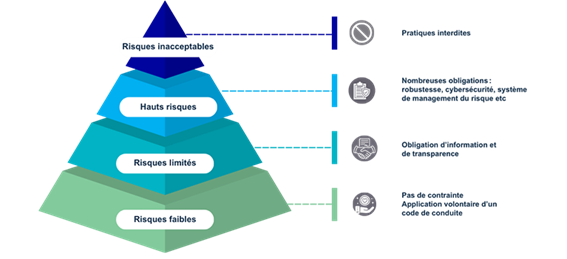

How Does the EU AI Act Classify AI Systems?

The EU AI Act structures obligations around four risk levels: unacceptable risk, high risk, limited risk, and minimal risk. A system's level depends on the project, its business purpose, and the data processed, while the obligations that apply also depend on the organization's role.

AI Maturity Assessment: A Simple Framework for Risk Control

What is evaluated?

Bureau Veritas assesses generative AI systems used in conversational agents (e.g., chatbots), considering:

- data used

- models

- processes

- internal competencies

- associated governance

Objective: manage Responsible AI risks and ensure safe and compliant use.

A structured analysis

A unique evaluation based on eight standardized pillars derived from the EU AI Act, covering regulatory obligations and data‑management good practices:

- explainability

- controllability

- transparency

- safety

- fairness

- governance

- confidentiality & information security

- robustness & accuracy

This evaluation is refined using international standards and frameworks: NIST, ISO/IEC 42001, ISO/IEC 24027, etc.

A complete audit process

- Pre‑audit: applicability review of the AI system

- Automated documentation audit: process and documentation analysis

- On‑site field audit: evaluation with your operational teams

- Automated direct tests: direct invocation of the AI system to test critical properties

Deliverable: AI Maturity Audit Report

- Detailed report strengthening stakeholder trust

- Actionable recommendations to improve compliance

- Evaluation adapted to the AI system’s risk level in line with the AI Act classification

AWS and AIRI — AI Technology for Efficient Auditing

AWS provides Bureau Veritas with technological capabilities powering AIRI (AI Risk Intelligence) at the heart of the responsible AI maturity audit process.

AIRI automates the analysis of large volumes of data, making the audit faster and more efficient while keeping humans at the center.

The delivery model relies on AWS infrastructure to evaluate the eight standardized pillars: explainability, fairness, governance, confidentiality and security, safety, controllability, robustness and accuracy, and transparency.

Benefits of the Responsible AI Maturity Audit

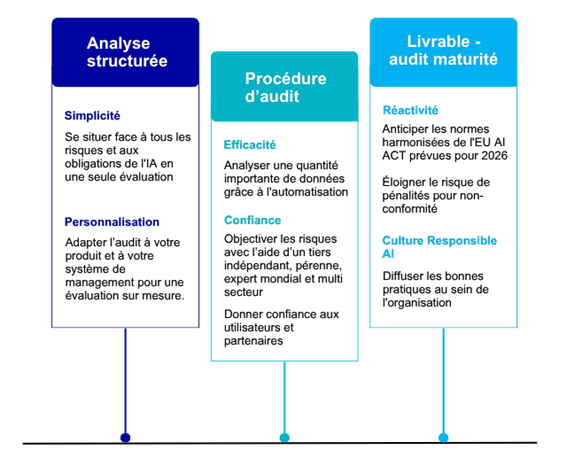

Three key benefit areas:

- Simplicity and customization through structured analysis

- Efficiency and trust in the audit process

- Responsiveness and Responsible AI culture supported by the audit deliverable

Why Choose Bureau Veritas?

An innovative and comprehensive solution:

- 100% coverage of EU AI Act obligations for both deployers and providers of generative AI systems

- Integration of international best practices for a reliable and recognized assessment

- Recognized expertise in risk and quality management systems

- Advanced automation with proprietary IT tools including a “Judge LLM”

- Guaranteed human oversight: experienced auditors interpret the data

Frequently Asked Questions

What is AI data maturity assessment?

A systematic analysis of the quality, governance, and readiness of the data used to train and power AI systems. It examines dimensions such as completeness, accuracy, relevance, accessibility, and regulatory compliance.

It identifies gaps, potential biases, and risks linked to data quality, and guides improvements to ensure robust AI model performance and reliability.

What is Responsible AI?

Responsible AI refers to principles, practices, and governance aimed at developing and deploying AI systems ethically, transparently, and in accordance with societal values. It relies on transparency, fairness, accountability, data security, and regulatory compliance.

What are the main obligations of the EU AI Act?

Obligations vary by risk level:

- Bans on unacceptable‑risk systems

- Strict requirements for high‑risk systems (conformity assessment, technical documentation, human oversight...)

- Transparency obligations for limited‑risk systems

What are the pillars of Responsible AI?

Eight pillars structure the development, deployment, and governance of AI systems:

- Explainability

- Controllability

- Transparency

- Safety

- Fairness

- Governance

- Confidentiality & information security

- Robustness & accuracy

How do I know if my system is high risk?

A system is generally considered high risk when it is intended for use in critical domains such as recruitment, education, healthcare, or public services. You can run a quick first check with the EU AI Act Compliance Checker.

Bureau Veritas can support this regulatory qualification process.

What if my AI system is not high risk?

Even if not high risk, it remains essential to manage AI‑related risks. Insufficient governance can hinder adoption and damage reputation.

The Responsible AI Maturity Audit helps organizations evaluate and control AI risks proactively.

How long does an AI maturity audit take?

Duration depends on:

- complexity and number of use cases

- associated risk level

- your organization’s role (provider or deployer)

On average:

A standard initial audit requires around 5 days of effort from your teams and 20 days of work from our teams.

Subsequent audits are faster thanks to automation and increased maturity.

How do I prepare for an AI maturity audit?

Coordinate with the following roles:

- AI use‑case Product Owner

- Business representative

- AI governance lead

- IT, cybersecurity, and data protection representative

- If possible: quality and risk management lead

Prepare documentation demonstrating risk control and AI compliance.

What are the three levers of Responsible AI?

- Understand & scope

Objective: Identify your role (provider / deployer), your AI use cases, and your AI Act obligations.

Our support:

› AI Act applicability diagnosis

› Classification of systems and use cases

› Regulatory framing and risk prioritization

- Assess & control AI risks

Objective: Measure and test AI-related risks and AI Act compliance gaps.

Our support:

› AI Act Maturity Audit

› AI risk analysis & threat modeling

› Assessment of the robustness and explainability of models

› Technical and security tests (including generative AI)

- Deploy trustworthy AI

Objective: Ensure sustainable compliance and the secure adoption of AI.

Our support:

› Support for implementing the AI Act (governance, processes, documentation, etc.)

› Definition of best practices and controls

› Awareness and training for management and operational teams

What are the key steps toward EU AI Act compliance?

- Establish regulatory foundations

› Inventory of AI systems

› Analysis of AI systems

› Applicability of the AI Act (classification, roles, obligations)

Objective: Clearly understand your AI scope in order to act with confidence.

- Understand and build compliance

› Measuring compliance gaps

› Defining a prioritized action plan

› Documenting compliance (register, logs, technical documentation)

› Deployment in line with the regulatory schedule

Objective: Structure actions to integrate the AI Act smoothly and in a controlled manner.

- Demonstrate & maintain conformity

› Formally demonstrate compliance

› Post-market monitoring and regular updates

› Periodic reassessment of compliance

Objective: Guarantee sustainable compliance that keeps pace with evolving uses.

Why is it important to verify AI‑generated information?

To ensure reliability and quality in professional decision‑making.

AI can generate plausible‑sounding but incorrect answers ("hallucinations"). Unverified outputs can compromise regulatory compliance, harm reputation, and create legal liabilities.

Bureau Veritas helps control these risks through maturity audits.